
A correlation coefficient is a numerical measure of some type of correlation, meaning a statistical relationship between two variables. The variables may be two columns of a given data set of observations, often called a sample, or two components of a multivariate random variable with a known distribution. Several types of correlation coefficient exist, each with its own definition, range of usability, and characteristics. They all assume values in the range from −1 to +1, where ±1 indicates the strongest possible correlation and 0 indicates no correlation. There are several different measures for the degree of correlation in data, depending on the kind of data: principally whether the data is a measurement, ordinal, or categorical. As tools of analysis, correlation coefficients present certain problems, including the propensity of some types to be distorted by outliers and the possibility of incorrectly being used to infer a causal relationship between the variables (for more, see Correlation does not imply causation).

The coefficient of determination $r^2$ can always be computed by squaring the correlation coefficient $r$ if it is known. Any one of the defining formulas can also be used. Typically one would make the choice based on which quantities have already been computed. The discrepancy between the value here and in the previous example is because a rounded value of $r$ from Note 10.19 "Example 3" was used there. The actual value of $r$ before rounding is −0.8186864772, which when squared gives the value for $r^2$ obtained here.

What should be avoided is trying to compute $r$ by taking the square root of $r^2$, if it is already known, since it is easy to make a sign error this way. To see what can go wrong, suppose $r^2 = 0.64$. Taking the square root of a positive number with any calculating device will always return a positive result, here 0.8. However, the actual value of $r$ might be the negative number −0.8.
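The sign pitfall can be made concrete with a short Python sketch. The helper name `r_from_r_squared` and its `sign_source` parameter are illustrative and do not come from the text. The idea is to take the sign of $r$ from a quantity known to share it, such as $SS_{xy}$ or the regression slope, or better, to compute $r$ directly. The second half uses the $SS_{xx}$, $SS_{xy}$, and $SS_{yy}$ values quoted for the vehicle example.

```python
import math

def r_from_r_squared(r_squared, sign_source):
    """Recover r from r^2, taking the sign from a quantity that has the
    same sign as r (for example SS_xy or the regression slope)."""
    return math.copysign(math.sqrt(r_squared), sign_source)

# The square root alone is never negative, so the sign of r is lost.
naive = math.sqrt(0.64)

# Supplying any negative sign source (here -1.0) restores the sign.
signed = r_from_r_squared(0.64, -1.0)

# Computing r directly from the sums of squares avoids the problem entirely.
ss_xx, ss_xy, ss_yy = 14.0, -28.7, 87.781
r_direct = ss_xy / math.sqrt(ss_xx * ss_yy)

print(naive, signed, r_direct)
```

Because $SS_{xy}$ sits in the numerator, the direct formula carries the sign automatically, which is why it is the safer route whenever the sums of squares are available.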

A measure of how useful it is to use the regression equation for prediction of $y$ is how much smaller $SSE$ is than $SS_{yy}$. In particular, the proportion of the sum of the squared errors for the line $\hat{y} = \bar{y}$ that is eliminated by going over to the least squares regression line is

$$\frac{SS_{yy} - SSE}{SS_{yy}} = \frac{SS_{yy}}{SS_{yy}} - \frac{SSE}{SS_{yy}} = 1 - \frac{SSE}{SS_{yy}}$$

We can think of $SSE / SS_{yy}$ as the proportion of the variability in $y$ that cannot be accounted for by the linear relationship between $x$ and $y$, since it is still there even when $x$ is taken into account in the best way possible (using the least squares regression line; remember that $SSE$ is the smallest the sum of the squared errors can be for any line). Seen in this light, the coefficient of determination, the complementary proportion of the variability in $y$, is the proportion of the variability in all the $y$ measurements that is accounted for by the linear relationship between $x$ and $y$.

In the context of linear regression the coefficient of determination is always the square of the correlation coefficient $r$ discussed in Section 10.2 "The Linear Correlation Coefficient". Thus the coefficient of determination is denoted $r^2$, and we have two additional formulas for computing it.

Use each of the three formulas for the coefficient of determination to compute its value for the example of ages and values of vehicles. In Note 10.19 "Example 3" in Section 10.4 "The Least Squares Regression Line" we computed the exact values $SS_{xx} = 14$, $SS_{xy} = -28.7$, $SS_{yy} = 87.781$, and $\hat{\beta}_1 = -2.05$. In Note 10.24 "Example 5" in Section 10.4 "The Least Squares Regression Line" we computed the exact value $SSE = 28.946$.
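The three-way computation can be checked with a short Python sketch. The text does not spell out the two additional formulas, so the sketch assumes the standard identities $r^2 = SS_{xy}^2 / (SS_{xx}\,SS_{yy})$ (the square of $r$) and $r^2 = \hat{\beta}_1 SS_{xy} / SS_{yy}$, alongside $(SS_{yy} - SSE)/SS_{yy}$. The variable names are illustrative.

```python
# Exact values quoted in the text for the vehicle age/value example.
ss_xx = 14.0
ss_xy = -28.7
ss_yy = 87.781
beta1_hat = -2.05
sse = 28.946

# Formula 1: proportion of SS_yy eliminated by the regression line.
r2_from_sse = (ss_yy - sse) / ss_yy

# Formula 2 (assumed standard identity): square of r = SS_xy / sqrt(SS_xx * SS_yy).
r2_from_r = ss_xy ** 2 / (ss_xx * ss_yy)

# Formula 3 (assumed standard identity): slope times SS_xy over SS_yy.
r2_from_slope = beta1_hat * ss_xy / ss_yy

for name, value in [("from SSE", r2_from_sse),
                    ("from r", r2_from_r),
                    ("from slope", r2_from_slope)]:
    print(f"r^2 {name}: {value:.6f}")
```

With unrounded inputs the three results agree up to floating-point error, at about 0.6702. Rounding $r$ before squaring it shifts the last digits, which is the kind of discrepancy the text mentions.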

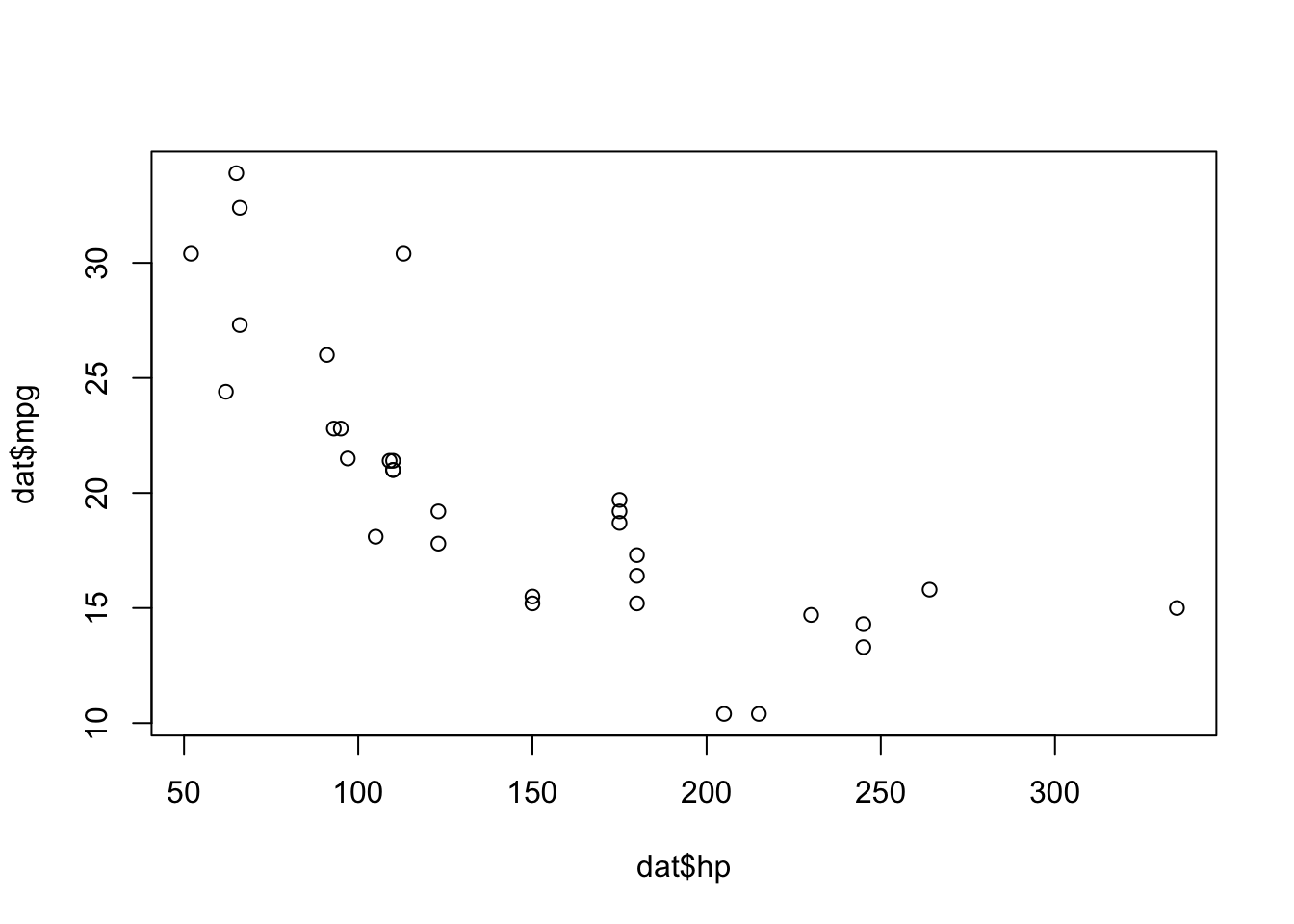

Figure 10.12 Same Scatter Diagram with Two Approximating Lines

The sum of the squared errors computed for the regression line, $SSE$, is smaller than the sum of the squared errors computed for any other line. In particular it is less than the sum of the squared errors computed using the line $\hat{y} = \bar{y}$, which sum is actually the number $SS_{yy}$ that we have seen several times already.
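The comparison between the two approximating lines can be sketched numerically in Python. The data are hypothetical, chosen only for illustration, and are not the vehicle data from the text. The helper names `sse` and `least_squares_line` are likewise assumptions.

```python
def sse(xs, ys, intercept, slope):
    """Sum of squared errors of the line y = intercept + slope * x."""
    return sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))

def least_squares_line(xs, ys):
    """Return (intercept, slope) of the least squares regression line."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    ss_xx = sum((x - x_bar) ** 2 for x in xs)
    ss_xy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = ss_xy / ss_xx
    return y_bar - slope * x_bar, slope

# Hypothetical data (NOT taken from the text).
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [9.0, 7.5, 6.0, 3.5, 2.0]

b0, b1 = least_squares_line(xs, ys)
y_bar = sum(ys) / len(ys)

sse_regression = sse(xs, ys, b0, b1)      # SSE for the least squares line
sse_mean_line = sse(xs, ys, y_bar, 0.0)   # horizontal line y = y_bar, equals SS_yy
sse_shifted = sse(xs, ys, b0 + 0.5, b1)   # an arbitrary other line

r_squared = 1 - sse_regression / sse_mean_line
print(sse_regression, sse_mean_line, sse_shifted, r_squared)
```

For this made-up sample the regression line gives the smallest of the three sums, and the ratio to the horizontal-line sum yields the coefficient of determination through $1 - SSE / SS_{yy}$.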
